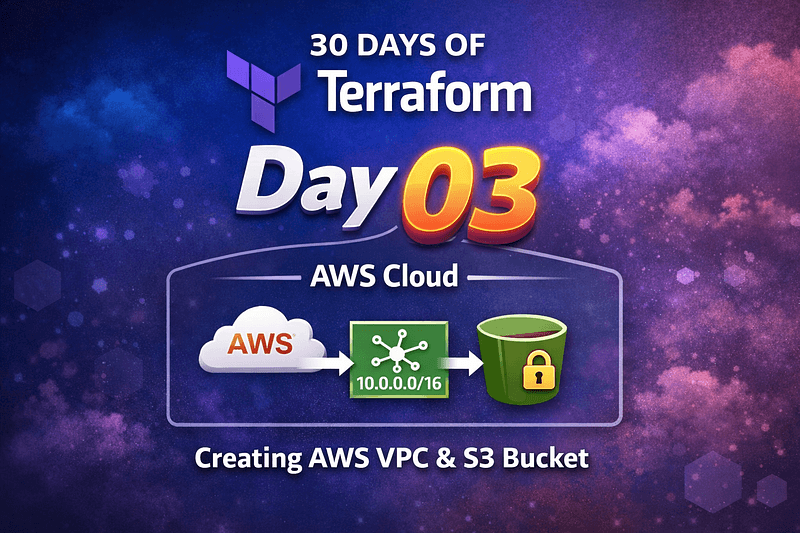

Day 3 of Terraform: When Infrastructure Becomes Predictable

Diving into Cloud and DevOps like a kid who learned cycling yesterday and signed up for a race today. I break things, read error logs like bedtime stories, and slowly figure out how not to repeat the same mistakes. If you enjoy honest tech journeys with a bit of humor, you’ll fit right in.

#30daysofawsterraform

By Day 3 of working with Terraform, the focus naturally shifts from writing configuration to understanding behavior. Provisioning an S3 bucket or a VPC is not the hard part. The real learning starts when something fails, partially succeeds, or behaves differently than expected.

This day was about building AWS infrastructure with Terraform, but more importantly, about understanding how Terraform thinks: how it plans, applies, tracks state, and reacts to failure. That mental model is what separates copy-paste infrastructure from reliable Infrastructure as Code.

The Architecture: Simple by Design, Intentional by Choice

The architecture for Day 3 was deliberately minimal:

- An AWS VPC, representing foundational network infrastructure
- A private S3 bucket, representing a resource whose name must be globally unique
- Terraform state managing both resources

Terraform acting as the control plane, provisioning and managing AWS VPC and S3 resources through the AWS provider.

There is no direct dependency between a VPC and an S3 bucket, and that’s precisely why this setup is useful. It allows Terraform’s execution model to be observed clearly. Each resource exists independently, yet both are governed by the same state file. That shared state is what ties everything together.

Terraform’s job is not to “run commands” against AWS. Its job is to continuously reconcile three things: configuration, state, and real infrastructure. Once you see Terraform through that lens, many confusing behaviors start making sense.

Writing the Configuration

The starting point was straightforward. An AWS provider and a basic VPC definition:

    provider "aws" {
      region = "us-east-1"
    }

    resource "aws_vpc" "example" {
      cidr_block = "10.0.0.0/16"
    }

Nothing unusual here. The complexity emerged with S3.

Unlike most AWS resources, S3 bucket names share a single global namespace across AWS accounts and regions. A name that looks unique locally may already be taken by someone else, somewhere else in the world. This is where many Terraform beginners get stuck, and where Terraform’s behavior becomes educational.

An initial hardcoded bucket name predictably failed with a BucketAlreadyExists error. Terraform stopped execution, but it did not roll anything back. The VPC, if already created, remained. This is not a bug. It’s a design decision.

Terraform is declarative, not transactional.
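As a rough illustration of the kind of configuration that runs into this, here is a minimal sketch. The resource label and the bucket name are hypothetical, picked only because short generic names are very likely to be taken already; they are not the values used on Day 3.

```hcl
# Hypothetical example: "my-test-bucket" is an assumed name, not the original one.
# A generic name like this is very likely owned by another AWS account already,
# so creating it can fail even though the configuration itself is valid.
resource "aws_s3_bucket" "hardcoded" {
  bucket = "my-test-bucket"
}
```

Because this bucket and the VPC do not depend on each other, a failure here does not undo a VPC that was created in the same apply.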

Solving the S3 Naming Problem Properly

The correct solution is not to keep guessing bucket names. The correct solution is to design uniqueness into the configuration.

Terraform provides a clean way to do this using the random provider:

    resource "random_id" "suffix" {
      byte_length = 4
    }

    resource "aws_s3_bucket" "example" {
      bucket = "aditya-tf-day3-${random_id.suffix.hex}"

      tags = {
        Name        = "My bucket"
        Environment = "Dev"
        ManagedBy   = "Terraform"
      }
    }

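This approach does not strictly guarantee global uniqueness, but it makes a collision extremely unlikely (4 random bytes give 8 hex characters, roughly 4.3 billion possible suffixes) while keeping names readable. The important detail is that random_id is stable once created: its value is stored in state, so Terraform does not regenerate it on every apply. That stability is what allows consistent infrastructure over time.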
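If the suffix ever does need to change on purpose, the random_id resource accepts a keepers map. The following is a sketch of a variation on the block above, not part of the original Day 3 configuration; the environment key and its value are assumptions made for illustration.

```hcl
# Variation on the random_id block shown earlier (use one or the other, not both).
resource "random_id" "suffix" {
  byte_length = 4 # 4 random bytes, rendered as 8 hex characters by .hex

  # Assumption for illustration: tie the suffix to an environment label.
  # The value stays fixed across applies until something in keepers changes,
  # at which point Terraform plans a new random_id and, with it, a new bucket name.
  keepers = {
    environment = "dev"
  }
}
```

Without keepers, the suffix only changes if the random_id resource itself is replaced or removed from state.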

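Adding this resource also surfaced another subtle concept: provider management. Introducing random_id meant re-running terraform init so that the hashicorp/random provider was installed and recorded in the dependency lock file (.terraform.lock.hcl). Here that was done with terraform init -upgrade; a plain terraform init is normally enough for a newly added provider, while -upgrade additionally moves already locked providers to the newest versions the constraints allow. Terraform is strict here by design, and that strictness pays off in long-term reproducibility.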
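One way to make those provider dependencies explicit is a required_providers block. This is a minimal sketch; the version constraints are assumptions chosen for illustration and were not stated in the original setup.

```hcl
terraform {
  required_providers {
    aws = {
      source  = "hashicorp/aws"
      version = "~> 5.0" # assumed constraint, adjust to the version in use
    }
    random = {
      source  = "hashicorp/random"
      version = "~> 3.0" # assumed constraint, adjust to the version in use
    }
  }
}
```

With both providers declared, terraform init resolves them against these constraints and records the selected versions in the lock file.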

State Is the Real Engine

One of the most important lessons from Day 3 was how Terraform state behaves during partial failures.

If Terraform successfully creates a VPC but fails to create an S3 bucket, the state file reflects exactly that. On the next run, Terraform does not “start over.” It resumes. The plan only includes what is missing or drifted.
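A small way to see what state currently knows is to expose a few attributes as outputs. The sketch below assumes the aws_vpc.example and aws_s3_bucket.example resources defined earlier; the output names and descriptions are hypothetical.

```hcl
# Output names are assumptions for illustration.
output "vpc_id" {
  description = "ID of the VPC as recorded in state"
  value       = aws_vpc.example.id
}

output "bucket_name" {
  description = "Final bucket name, including the random suffix"
  value       = aws_s3_bucket.example.bucket
}
```

After a successful apply, terraform output prints these values from state.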

The same applies to updates. When tags were added to the existing VPC, Terraform detected the difference and planned an in-place update. No recreation. No downtime. Just a controlled change reflected cleanly in state after apply.
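The tag change described above can be sketched like this. The tag keys and values are assumptions for illustration; the post does not list the exact tags that were added to the VPC.

```hcl
resource "aws_vpc" "example" {
  cidr_block = "10.0.0.0/16"

  # Assumed tags, shown only to illustrate an in-place update.
  # Adding or editing tags changes the existing VPC; it is not replaced.
  tags = {
    Name      = "day3-vpc"
    ManagedBy = "Terraform"
  }
}
```

In the plan, an in-place change is marked with a ~ next to the resource, while a replacement would show as a destroy followed by a create.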

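This is why terraform plan is non-negotiable. It is not a suggestion. It is the most accurate preview available of what Terraform is about to do, and saving it with terraform plan -out and applying that saved file ensures that exactly those actions are what run.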

Destruction Is Just Another State Transition

Running terraform destroy is not a special mode. It is simply another plan (effectively terraform apply -destroy), with the desired end state being “nothing.” Terraform destroys only what exists in state, in reverse dependency order, and only after explicit confirmation.

That predictability is what makes Terraform safe to use at scale.

Takeaway

Day 3 reinforced a critical truth: Terraform is less about writing .tf files and more about understanding state-driven change.

By working through real failures, name collisions, provider mismatches, and in-place updates, the infrastructure stopped feeling magical and started feeling mechanical — in the best possible way. Once you trust the plan, respect the state, and design for uniqueness and clarity, Terraform becomes a reliable system rather than a fragile tool.

That shift in mindset is where real DevOps learning begins.